Someone has to be responsible for time.

This is our responsibility.

Discover how we got here below

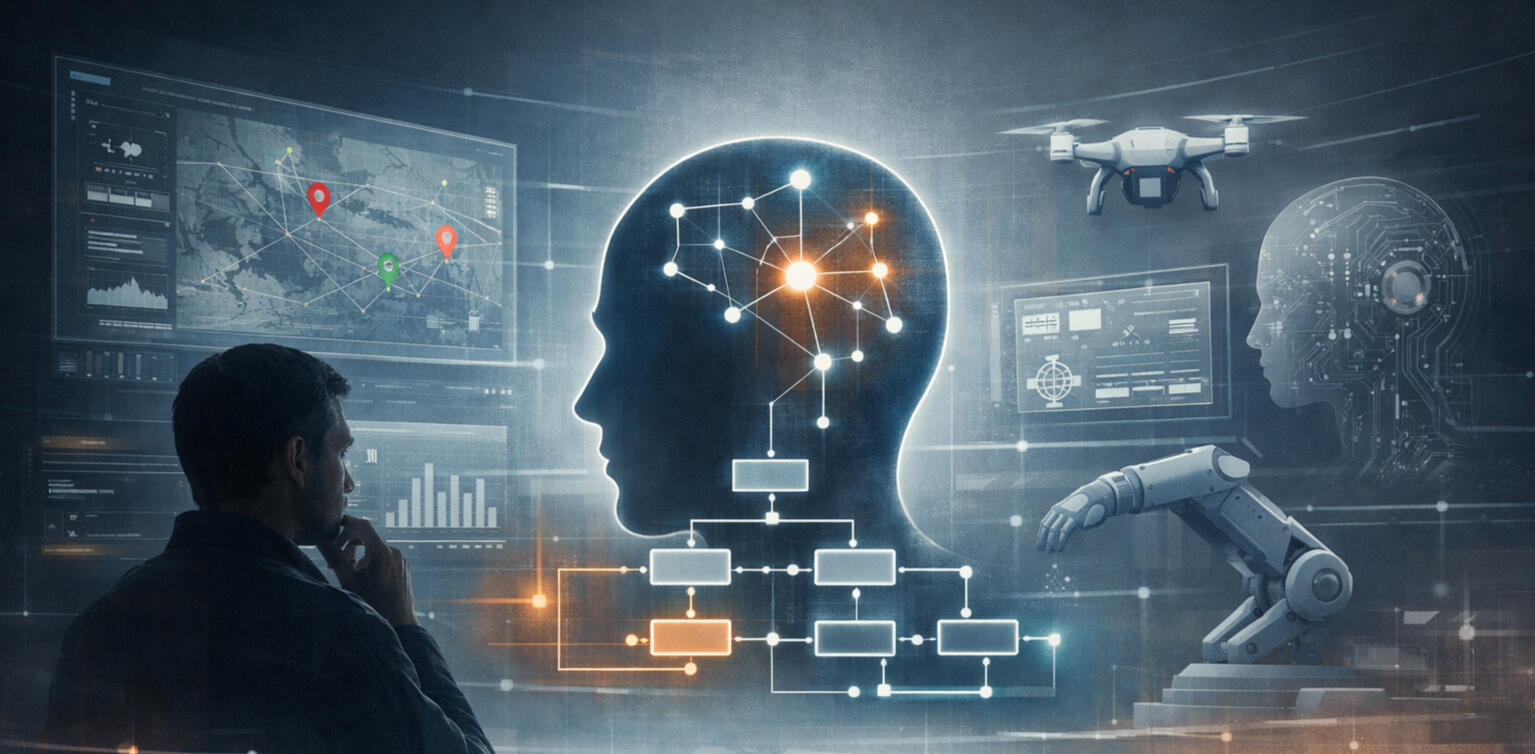

Intelligence shapes attention, risk, and trust.

This timeline traces how AI evolved from small everyday helpers to quiet, dependable systems that fade into the background.

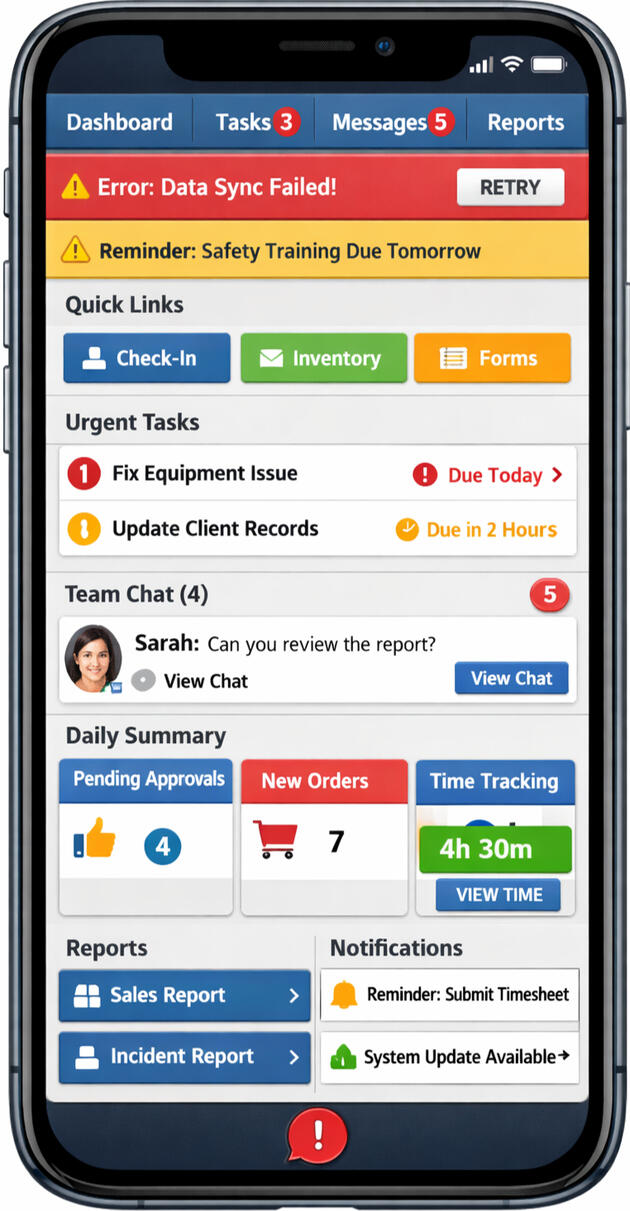

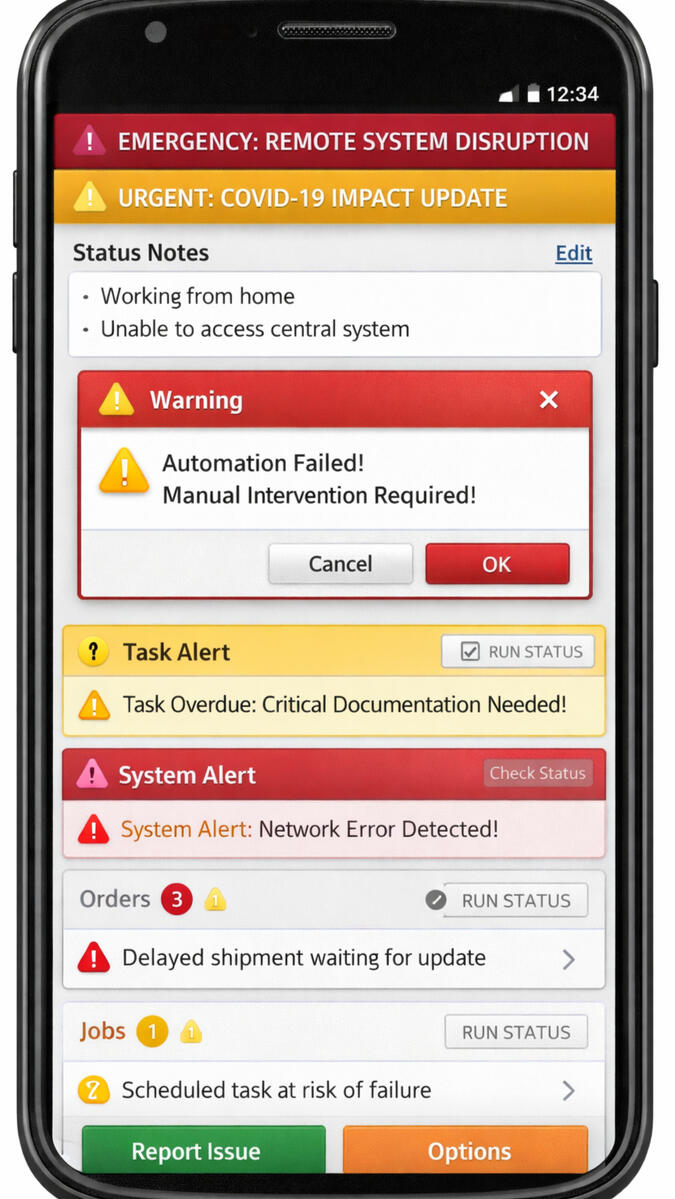

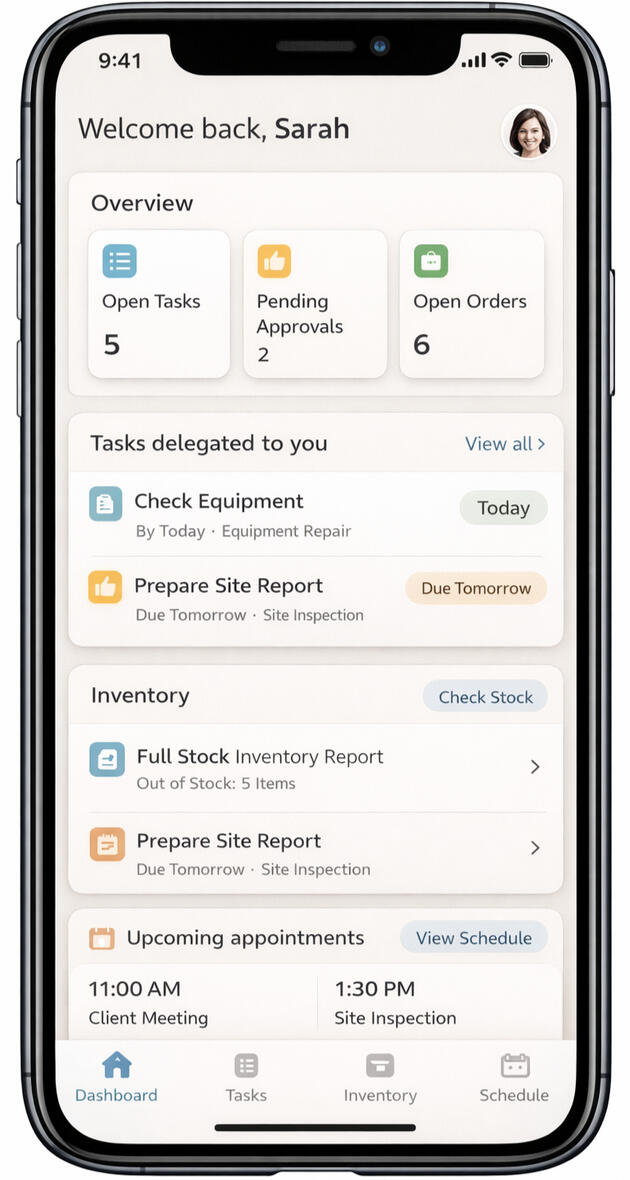

Interfaces reflect how much strain we expect humans to carry.

This timeline shows how screens evolved from survival tools to calm systems.

Words shape trust, stress, and authority.

This timeline traces how system language moved from cold instructions to human-centered clarity.

How work actually moves matters more than where it’s stored.

This timeline shows the shift from fragmented reporting to coordinated, context-aware systems.

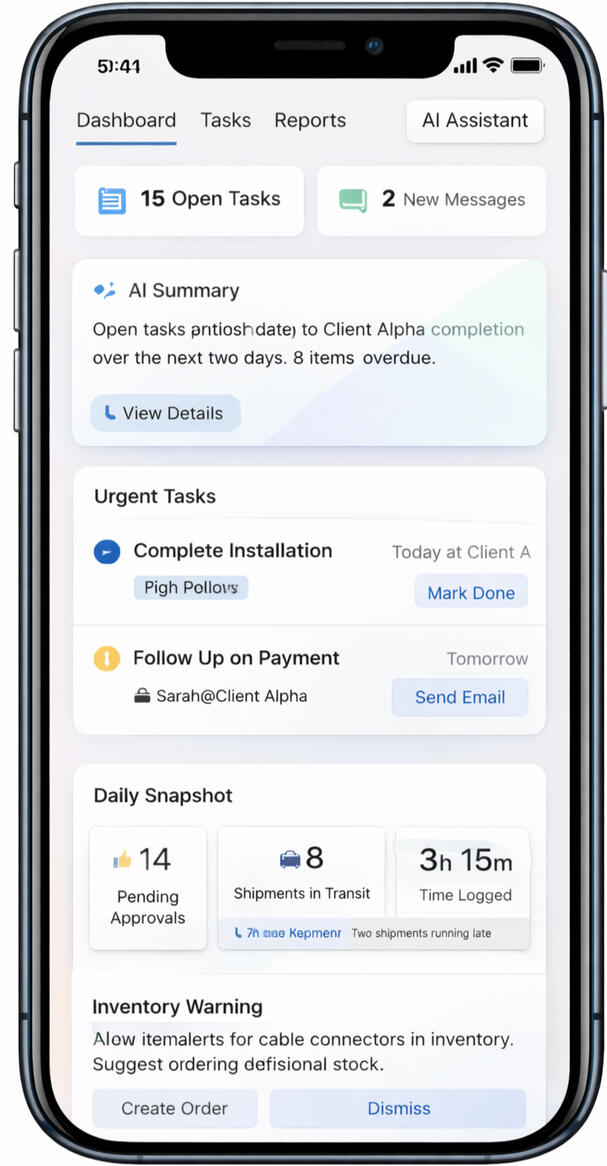

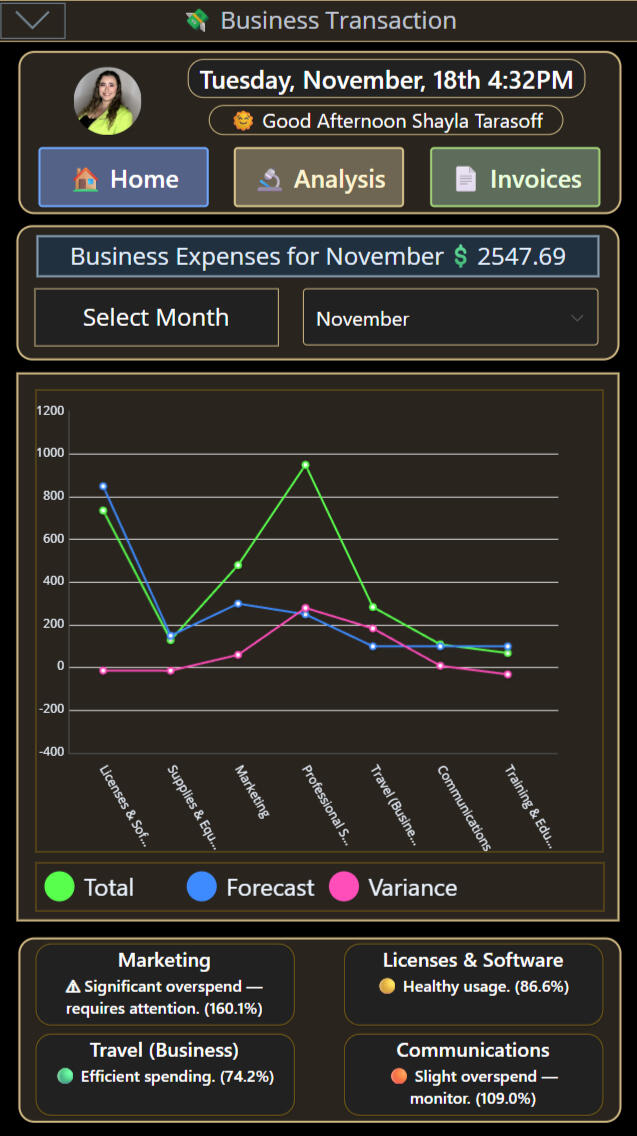

Demonstration Notice

All interface visuals shown here are illustrative and generated for educational purposes.

Only CortexForge solution demonstrations represent live, working systems.

CortexForge Library

The Invisible Intelligence

Systems that remember the human inside them

The Behaviour a System Assigns

Task Automation to Psychological Foresight

The Parallel Enterprise: Data Sovereignty in Practice

Ethical AI: Termination Is Governance


The Behaviour a System Assigns

Most leaders assume systems are neutral. But people rarely experience them as neutral.

An interface always sets a tone — and that tone shapes how safe it feels to act.

A workflow doesn’t just organize tasks. It quietly signals who the user is supposed to be inside the environment. And when that signal shifts, behavior often shifts with it.

This is Identity Architecture — the psychological layer beneath UX.

It explains something almost every organization sees, but rarely names:

The same person can produce high-quality work in one system — and low-quality work in another — without “changing” at all.

The environment changed. The identity shifted. The behavior followed.

___________________________________

The Invisible Role Assignment

Have you ever noticed how the same person behaves differently depending on the system they’re using?

In one tool, they’re calm and precise. In another, they hesitate, rush, avoid, or go silent.

Same employee. Same intelligence. Same intent.

Different system.

Because every interface communicates a role before the user reads a single word:

• “You’re a risk.” → defensiveness, hesitation, under-reporting.

• “You’re being tested.” → fear of mistakes, avoidance, learned helplessness.

• “You’re an operator.” → clarity, pride, ownership.

Most organizations believe they’re deploying a form or a workflow.

But the human nervous system receives something else first: status, safety, and whether this environment will punish uncertainty.

That interpretation shapes behavior long before policy ever does.

___________________________________

When Interfaces Feel Like Tests

Most people don’t fear new tools.

What they fear is feeling confused, exposed, or quietly blamed when they don’t get it right immediately.

This is where Material Intelligence matters.

Because the texture of a system isn’t abstract — it’s carried through surface.

Concrete doesn’t invite softness. Fabric doesn’t demand vigilance.

Digital environments work the same way.

A sterile, harsh interface can feel like concrete — rigid, evaluative, unforgiving. A coherent, calm interface can feel like fabric — supportive, legible, non-punitive.

And this is also where symbolism matters.

Under pressure, the brain doesn’t want to read. It wants to recognize.

A disciplined symbol layer reduces hesitation. It reduces wrong clicks. It lowers the fear of “doing it wrong.”

That isn’t aesthetic preference. It’s nervous system design.

___________________________________

The Shift We Actually Need

Human-centered design reduces cognitive load. Trauma-informed design reduces reactivation and protects dignity under pressure.

But together, they do something deeper:

They restore a person’s sense of capability inside the environment.

And when capability feels intact, quality follows.

The best systems don’t just manage work. They protect the person doing it.

Because when identity feels safe, performance compounds. When identity feels threatened, even talented people shrink.

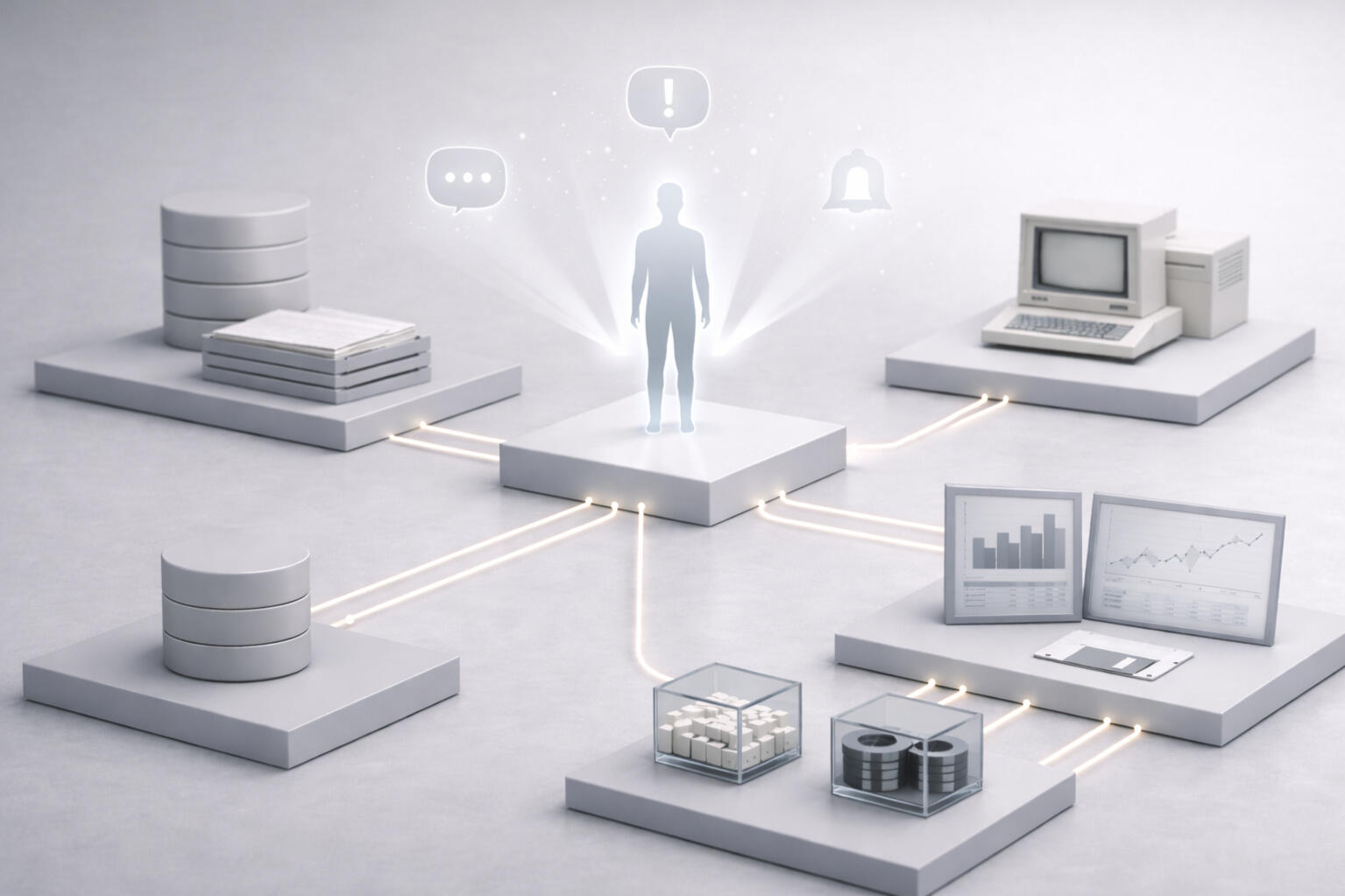

Systems that remember the Human Inside Them

Have you ever opened a system that was supposed to help — and immediately felt behind?

More dashboards. More workflows. More tools meant to simplify work.

Yet for many people, work doesn’t feel lighter. It feels heavier.

I see this pattern often: capable, thoughtful people spending more energy navigating systems than doing meaningful work. Adapting. Relearning. Trying to keep up. Over time, focus erodes. Fatigue replaces confidence. Not because people are resistant — but because constant recalibration takes a real human toll.

The issue isn’t technology itself. It’s the growing distance between systems and the people expected to use them.

Most people don’t fear new tools. They fear feeling confused, exposed, or quietly blamed when something goes wrong. Interfaces that feel like tests instead of tools. Processes that demand compliance without clarity.

When that happens, behavior changes. People hesitate. They disengage. Trust fades — not just in the system, but in themselves.

That’s why human-centered design isn’t a preference. It’s a necessity.

Behind every click is a nervous system carrying stress, memory, and responsibility. When systems are clear, predictable, and respectful, people don’t resist change — they stabilize. They think more clearly. They breathe.

Burnout isn’t resistance to innovation. It’s drowning in it.

At CortexForge, we don’t design systems to push people harder. We design them to carry complexity so people can carry meaning.

Because the future of work won’t be built by faster tools alone — it will be built by systems that remember the humans inside them.

The Parallel Enterprise: Data Sovereignty in Practice

Every company has the same problem: Everyone is using too many apps that don’t talk to each other. So people waste time copying, pasting, searching, guessing, and repeating the same work in five different places. Data sovereignty means everything finally works together — in one place — so nothing gets lost.

This isn’t one tool — it’s the whole workflow layer: emails, reports, approvals, incidents, training.

One system. One solution. Every department.

___________________________________

Fragmented Systems, Fragmented Insight

For years, enterprises have depended on a patchwork of specialized vendors — one for IT service management, another for HR, another for health and safety. Each delivers localized value but blocks visibility between domains. This fragmentation doesn’t protect organizations — it blinds them. When every dataset lives in a separate portal, leadership can’t see how one event influences another.

Ownership without visibility is just another form of dependency.

___________________________________

Mapping the Invisible

Imagine if every IT ticket carried a GPS coordinate. Within minutes, you could visualize clusters of issues on a floor plan — a wing where hardware failures or connectivity problems concentrate. That single insight, drawn from one dataset, reveals what becomes possible when an enterprise can see its information in parallel.

This is what happens when ownership meets awareness.
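One way to picture the clustering described above is to bucket tickets into grid cells on a floor plan and count them. The sketch below is only an illustration, not a CortexForge implementation: the `Ticket` fields, the 10-metre cell size, the threshold, and the sample data are all assumptions made up for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    """Hypothetical IT ticket with a position on a floor plan, in metres."""
    ticket_id: str
    category: str
    x: float
    y: float


def cluster_by_cell(tickets, cell_size=10.0):
    """Count tickets per (category, grid cell). cell_size is an assumed value."""
    counts = Counter()
    for t in tickets:
        cell = (int(t.x // cell_size), int(t.y // cell_size))
        counts[(t.category, cell)] += 1
    return counts


def hotspots(tickets, threshold=3, cell_size=10.0):
    """Return the (category, cell) pairs holding at least `threshold` tickets."""
    counts = cluster_by_cell(tickets, cell_size)
    return {key: n for key, n in counts.items() if n >= threshold}


# Made-up sample data: three connectivity tickets close together, one outlier.
sample = [
    Ticket("T1", "connectivity", 42.0, 7.5),
    Ticket("T2", "connectivity", 44.1, 3.2),
    Ticket("T3", "connectivity", 47.9, 9.9),
    Ticket("T4", "hardware", 12.0, 30.0),
]
print(hotspots(sample))  # {('connectivity', (4, 0)): 3}
```

A fixed grid is the simplest possible choice here. A real deployment would more likely map coordinates to named rooms or wings, but the counting step would look much the same.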

___________________________________

Cross-Referencing for Awareness

When an organization owns its data end-to-end, that same precision becomes possible across departments. HR incidents, maintenance logs, safety reports, and network analytics can all be analyzed within the same governance layer. Data sovereignty means every department contributes its full fidelity — not vendor-filtered summaries. Cross-referencing events in real time exposes relationships that were once invisible. Even when no trend appears, the organization still gains something new: clarity.

Awareness itself is an operational asset.

___________________________________

Connecting Domains in Context

Consider an older section of a facility where multiple issues overlap. Maintenance reports repeated complaints about poor lighting. Security flagged the same zone as higher risk due to limited CCTV coverage. Weeks later, HR logged an internal theft involving employees from that area. Individually, each team responded within its own scope. Viewed together on a shared map, the connection is immediate: one environmental condition created multiple risks. Data sovereignty allows organizations to see context, not just categories.

Cross-domain visibility doesn’t predict the future — it prevents its repetition.

___________________________________

Eliminating Vendor Lock-In

Data sovereignty removes dependency. Instead of outsourcing storage, structure, or updates to third-party providers, the organization governs its own environment. Licensing through platforms such as Microsoft provides the infrastructure, but control of the data — and how it evolves — remains internal. When reporting needs change, new categories or workflows can be added instantly without vendor intervention. This autonomy also lowers privacy risk: sensitive information never passes through external systems, and auditability remains intact.

Vendor freedom is not luxury — it’s operational hygiene.

___________________________________

Invisible, Yet Absolute

Data sovereignty embodies the principle “invisible, yet absolute.” It is invisible because when systems operate without exposed APIs or external dependencies, they are not presenting targets to the outside world. The environment is closed by design, not by restriction. It is absolute because visibility and sharing become intentional acts. Executives decide what to expose, to whom, and when. Privacy becomes the baseline. Exposure becomes a choice.

The result is an enterprise that moves quietly, governs confidently, and adapts without permission.

Data sovereignty turns disconnected departments into one intelligence layer.

From Task Automation to Psychological Foresight

Most enterprise software assumes people are calm, informed, and uninterrupted. That’s rarely true.

In reality, leaders make decisions while juggling incomplete information, time pressure, and constant interruption. When systems are fragmented or inconsistent, that pressure compounds. You stop trusting the numbers on the screen. Decisions slow. Foresight turns into hope.

This is why task automation matters more than we usually admit.

Automation isn’t just about removing work from someone’s plate. When a task is automated properly, it becomes structured. The variables are defined. The process is consistent. And the outcome is reliable. The work isn’t just faster — it’s done at a higher calibre, every time.

When enough tasks are automated this way, something interesting happens. Leadership gains clarity without effort. Data arrives already structured, already validated, already continuous from the front line to the executive level. There’s no manual compiling. No interpretation drift. No middle layer needed to “explain” the numbers.

That’s where psychological foresight comes from.

Not intuition. Not resilience training. But trust in the system itself. When leaders can rely on what they see, they can pause, think clearly, and act calmly — even under pressure.

Your software shouldn’t demand resilience from your team. It should create it.

Ethical AI: Termination Is Governance

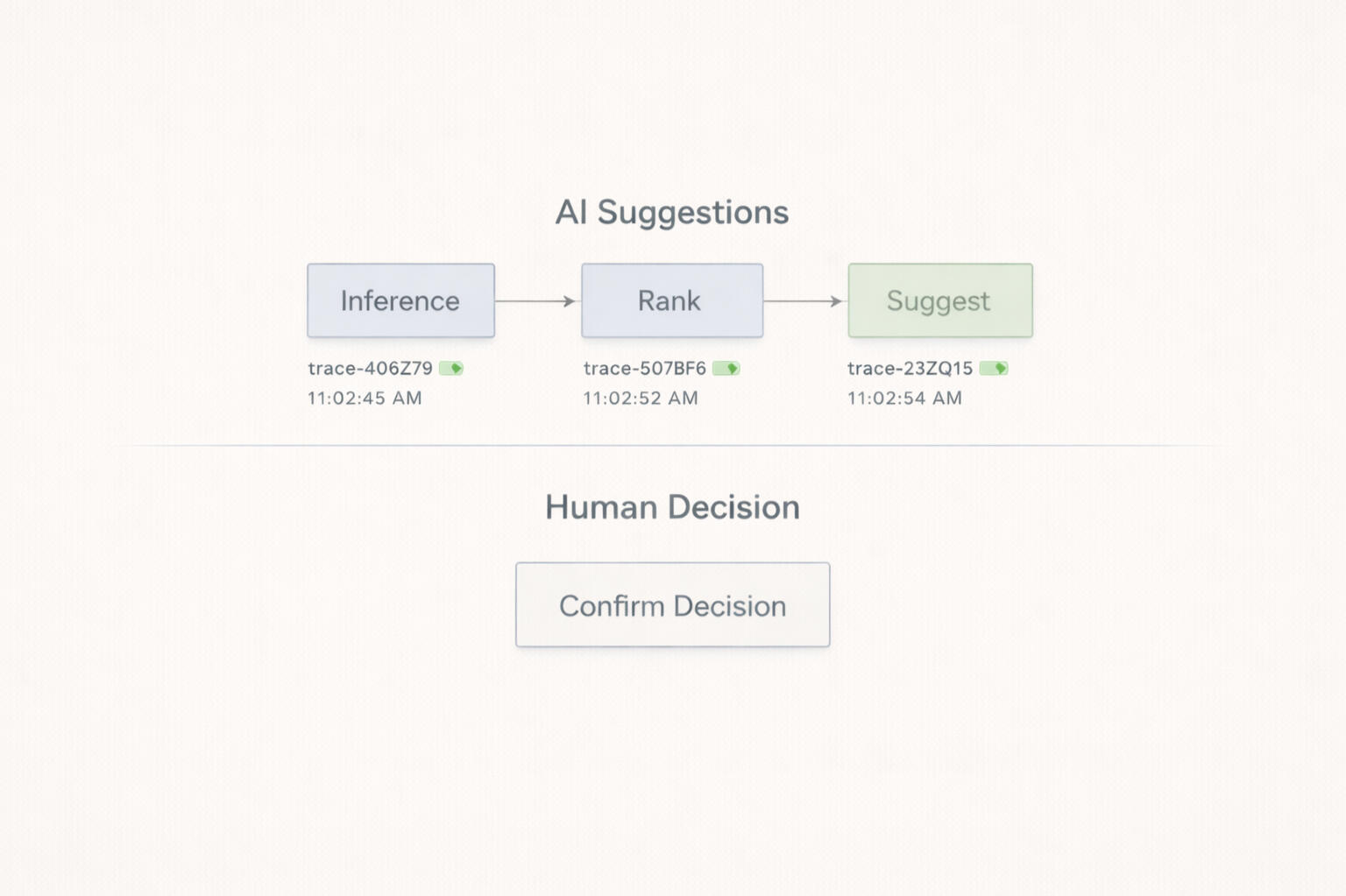

If you can’t clearly say who’s responsible and how the system stops, you don’t have “ethical AI.” You have automation with plausible deniability. In practice, ethics isn’t a vibe — it’s authority, boundaries, and traceability built into the workflow.

___________________________________

Most failures don’t come from “bad intelligence.” They come from unclear coordination. When humans and systems operate in parallel without explicit rules, both assume the other is handling it—and responsibility disappears into the gap.

___________________________________

So the first ethical move is boring (and foundational): clear handoffs. Who acts first. Who verifies. Who escalates. Who owns the final outcome. If humans and systems operate in parallel without a contract, both will assume the other is holding the wheel. Responsibility cannot be shared at the point of execution.

Coordination defines responsibility.

___________________________________

Then you draw the most important line: hard stops. Termination boundaries define what automation is forbidden to do, and the precise conditions where autonomous execution halts immediately — regardless of confidence, urgency, or pattern strength. This isn’t “being cautious.” It’s governance. Termination is not failure. Termination is governance.

Termination is structural.
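A hard stop of this kind can be sketched as a check that runs before any autonomous action and that never looks at confidence or urgency. This is a minimal illustration under assumptions: the action names, the `FORBIDDEN_ACTIONS` set, and the `ProposedAction` shape are hypothetical and do not come from any CortexForge system.

```python
from dataclasses import dataclass


class HardStop(Exception):
    """Raised when a proposed action crosses a termination boundary."""


# Hypothetical boundary list: actions automation may never execute on its own.
FORBIDDEN_ACTIONS = frozenset({"issue_discipline", "delete_record"})


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float  # model confidence in [0, 1]
    urgent: bool = False


def execute(action: ProposedAction) -> str:
    """Run an action unless it hits a hard stop.

    The boundary check deliberately ignores confidence and urgency,
    so neither can be used to override it.
    """
    if action.name in FORBIDDEN_ACTIONS:
        raise HardStop(f"'{action.name}' requires a human decision")
    return f"executed {action.name}"


print(execute(ProposedAction("send_reminder", confidence=0.61)))
try:
    execute(ProposedAction("issue_discipline", confidence=0.99, urgent=True))
except HardStop as stop:
    print("halted:", stop)
```

The second call halts even at 0.99 confidence with the urgent flag set, which is the property the paragraph above asks for.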

___________________________________

From there, you protect the domains that should never be flattened into metrics. Systems can prepare context, summarize, and recommend — but they cannot finalize protected human judgments: discipline, care and wellbeing decisions, moral interpretation, context-sensitive leadership calls, and anything with irreversible human impact. Put simply: efficiency never outranks dignity. If a decision defines a human outcome, it cannot be finalized by a machine.

Efficiency never outranks dignity.

___________________________________

Next is how trust survives time: auditability and accountability chains. Every recommendation, decision, approval, and override must be recorded with an owner. If an action can’t answer “why” later—if it’s untraceable, anonymous, or contextless—then it shouldn’t be able to occur at all.

If it can’t answer “why,” it shouldn’t occur.
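The rule that an untraceable action should not be able to occur can be sketched as a log that refuses any entry without an owner and a reason. The class and field names below are assumptions chosen for the example, not a description of an existing audit system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    action: str   # e.g. "recommendation", "approval", "override"
    owner: str    # the accountable person or role
    reason: str   # the recorded answer to "why"
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditLog:
    """Append-only log that rejects entries with no owner or no reason."""

    def __init__(self):
        self._entries = []

    def record(self, action: str, owner: str, reason: str) -> AuditEntry:
        if not owner.strip() or not reason.strip():
            raise ValueError("untraceable action rejected: owner and reason are required")
        entry = AuditEntry(action, owner.strip(), reason.strip())
        self._entries.append(entry)
        return entry

    def entries(self):
        """Return a read-only snapshot of everything recorded so far."""
        return tuple(self._entries)


log = AuditLog()
log.record("override", "duty.manager", "sensor fault confirmed on site")
print(len(log.entries()))  # 1
```

Callers would record the entry first and act only if `record` succeeds, so an anonymous or contextless action fails before it happens rather than being flagged afterwards.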

___________________________________

Finally, enforceability comes from architecture, not aspiration. Constrain the intelligence layer: scope its data access, define permitted inference, prohibit specific cross-references, and separate visibility from execution by design. Capability does not imply permission — architecture does.

Capability does not imply permission.

___________________________________

Ethics must hold under stress conditions, not just during steady state. Preserve intent as an operational constraint. Protect attention with cognitive load ceilings. Require graceful degradation when uncertainty rises. When confidence drops, authority retracts and scope narrows — because silent failure is the one failure mode institutions can’t survive.
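The idea that authority retracts as confidence drops can be sketched as a mapping from confidence to the most the system is allowed to do. The thresholds and scope names here are assumptions picked for illustration; real values would be a governance decision.

```python
def permitted_scope(confidence: float) -> str:
    """Map confidence to the widest permitted scope (assumed thresholds)."""
    if not 0.0 <= confidence <= 1.0:
        # Refuse to guess: an invalid input must fail loudly, not silently.
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= 0.9:
        return "execute_routine"  # act on routine, reversible work
    if confidence >= 0.6:
        return "recommend"        # suggest only; a human approves
    return "escalate"             # hand off to a human explicitly


for c in (0.95, 0.7, 0.2):
    print(c, permitted_scope(c))
```

Scope only ever narrows as confidence falls, and the lowest tier is an explicit escalation rather than a quiet no-op.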

___________________________________

In the end, ethics is a control layer. A designed hierarchy where humans outrank systems, escalation is non-bypassable, and termination is always available. When governance is structural, intelligence becomes usable and scalable — because it stays inside the boundaries that trust requires.

___________________________________

Full framework (boundaries, escalation rules, audit chains)

The Invisible Intelligence

A single incident becomes many operational realities.

Most people still imagine AI as something visible. A chatbot. A prompt box. A dashboard. A system you have to consciously go use.

But the most powerful intelligence in an organization often does not look like AI at all.

It looks like orchestration.

The right people being informed at the right time, with the right amount of context, without anyone needing to manually assemble the chain.

___________________________________

What this looks like in practice

A student fight breaks out at a university. A window is broken. Security responds. Photos are taken. The report is completed on scene, before the officer even leaves the area.

What matters is what happens next.

In most organizations, the same incident now has to be manually translated across multiple domains. Security writes the report. Someone emails facilities. Someone decides whether Student Services should know. Someone else determines whether Counselling follow-up is appropriate. Leadership may not see it until much later, depending on who notices the severity and forwards it.

The incident happened once. But the coordination burden gets recreated five different times.

This is where invisible intelligence matters.

In a well-structured environment, the system does not just store the report. It understands the organizational shape of the event.

Facilities do not need the full security narrative. They need a facilities-relevant version: broken window, location, damage context, urgency.

Student Services does not need every operational detail. They need a high-level notification that a specific student was involved in a security-related incident and may require follow-up.

Counselling services may require slightly more contextual detail to assess whether outreach is appropriate.

Security leadership needs the full report. And if the event crosses a severity threshold, the system can immediately triage it for manager or director review inside the platform itself, without relying on someone to forward an email or rebuild the story manually.

Same event. Different visibility. Different responsibilities. One governed coordination layer.

That is what invisible intelligence looks like.
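The routing described above, one report producing a different view for each recipient, can be sketched with a per-recipient field allowlist. Everything named here is a hypothetical stand-in: the `Incident` fields, the `VIEWS` table, the 1 to 5 severity scale, and the review threshold are assumptions for the example only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    location: str
    damage: str
    student_id: str
    narrative: str
    severity: int  # assumed scale: 1 (minor) to 5 (critical)


# Hypothetical visibility rules: which fields each recipient may see.
VIEWS = {
    "facilities": ("location", "damage", "severity"),
    "student_services": ("student_id",),
    "counselling": ("student_id", "location"),
    "security_leadership": ("location", "damage", "student_id", "narrative", "severity"),
}

LEADERSHIP_REVIEW_THRESHOLD = 4  # assumed severity cutoff


def route(incident: Incident) -> dict:
    """Build one redacted view per recipient from a single report."""
    views = {
        recipient: {name: getattr(incident, name) for name in allowed}
        for recipient, allowed in VIEWS.items()
    }
    # Severe incidents are additionally queued for director review.
    if incident.severity >= LEADERSHIP_REVIEW_THRESHOLD:
        views["director_review"] = {
            "severity": incident.severity,
            "location": incident.location,
        }
    return views


report = Incident(
    location="Library, east entrance",
    damage="broken window",
    student_id="S-1042",
    narrative="Two students fought; one window was broken.",
    severity=2,
)
views = route(report)
print(views["facilities"])
print(sorted(views))
```

An allowlist means a new field added to the report stays hidden from every recipient until someone deliberately grants access to it. The sketch only prepares and routes views; any follow-up decision remains with the people who receive them.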

___________________________________

The real shift

A system that knows how information should move.

That is where enterprise AI becomes operational.

Because the real friction inside institutions is rarely a lack of raw information. It is the failure to move the right information to the right people under the right rules.

When that movement is manual, coordination depends on memory. When that movement is governed, coordination becomes infrastructure.

And invisible does not mean uncontrolled.

The system still needs hard boundaries: who gets notified, what they are allowed to see, what must remain inside security, what can trigger leadership review, and where human judgment remains non-transferable.

AI can prepare, route, redact, and triage. Human authority still decides.

The future of enterprise AI may be the kind no one talks about — because the organization simply starts moving with more clarity, more precision, and less friction.

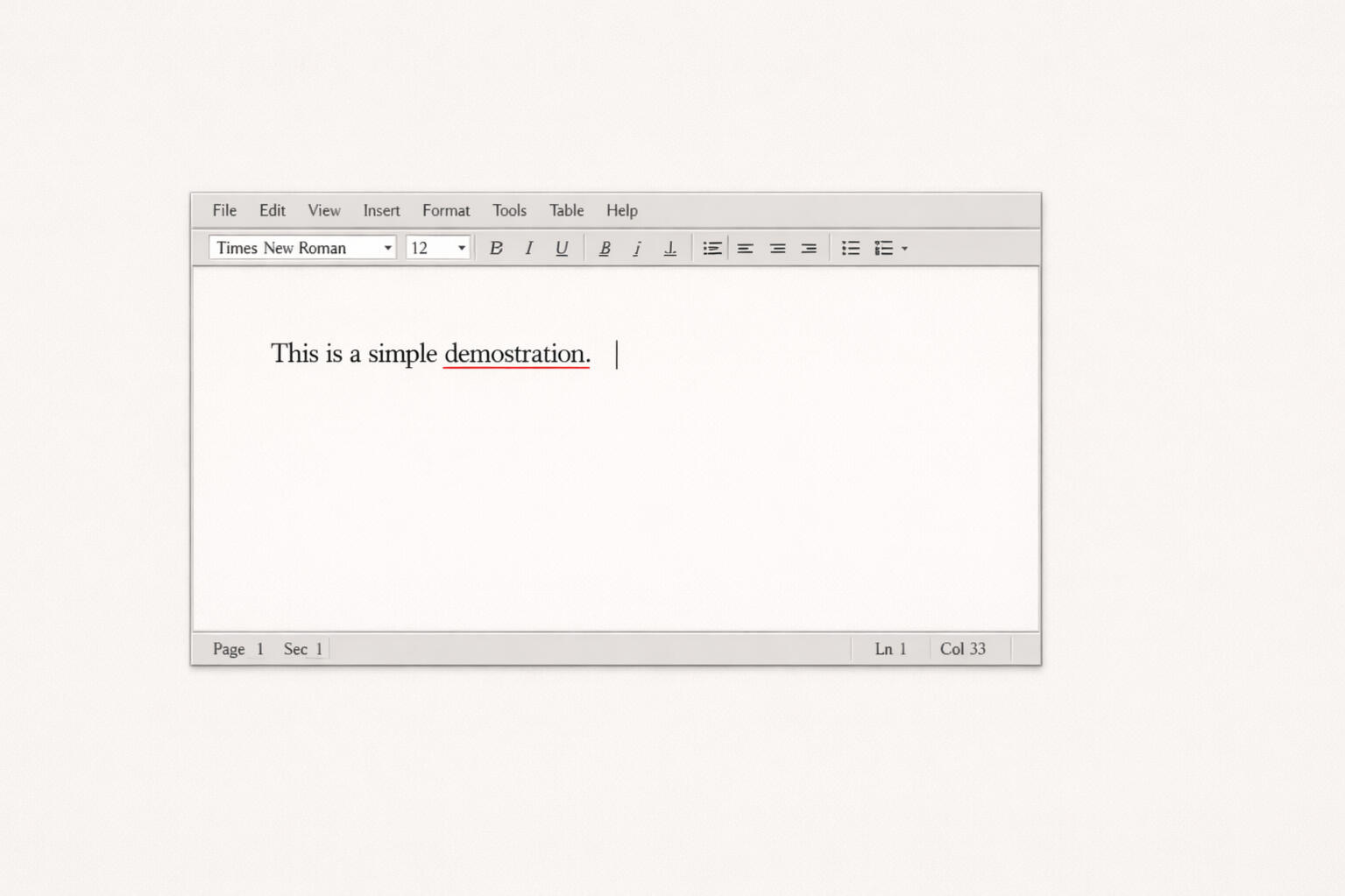

1998-2005

Small Helpers

Computers Start Assisting

Spellcheck underlined mistakes.

Autocorrect fixed words as you typed.

It wasn’t smart — just helpful.

Why this era matters:

It showed that people like technology when it quietly helps them.

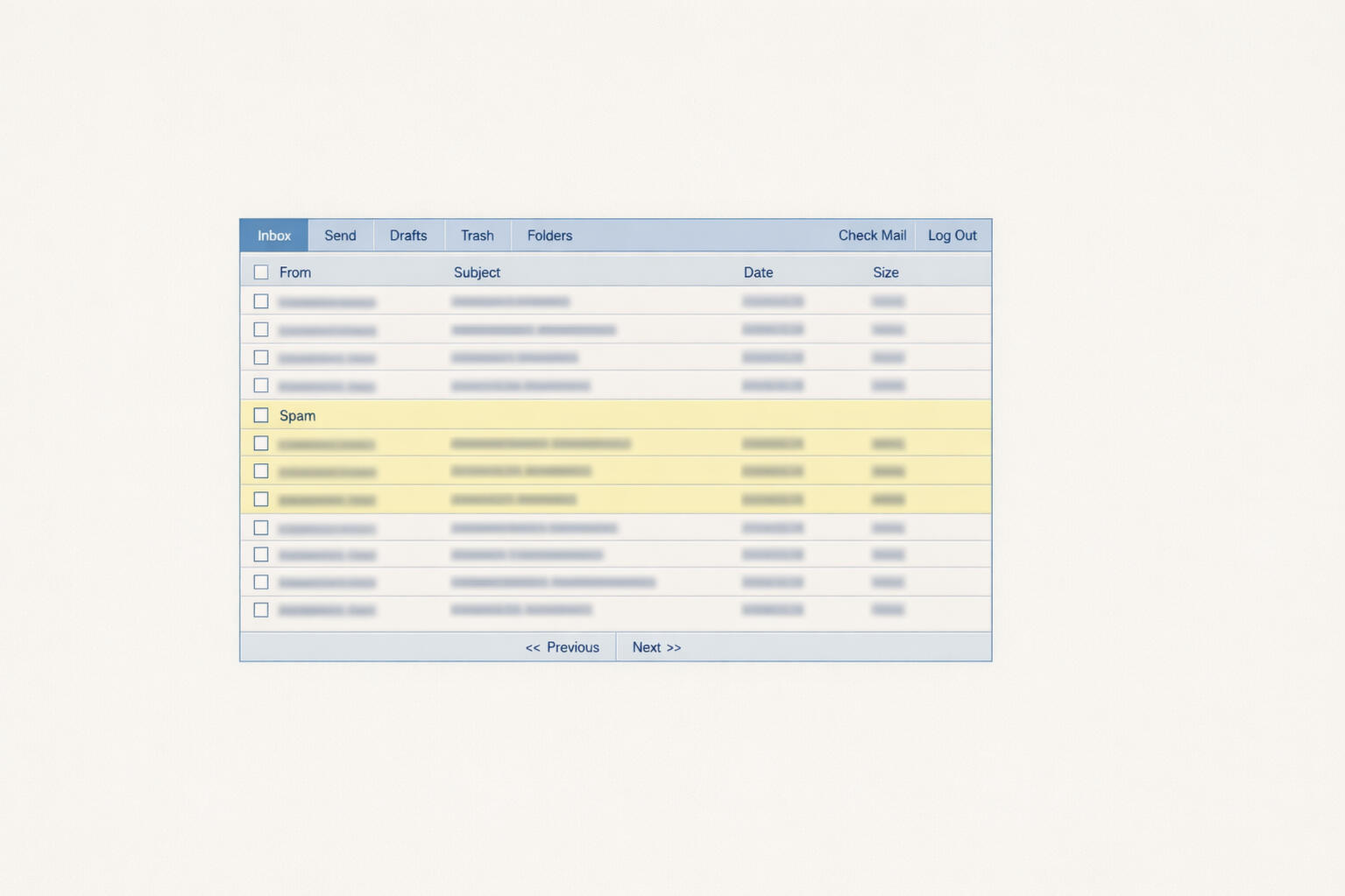

2006-2012

Pattern Spotting

Software Notices Habits

Email learned what spam looked like.

Search results improved based on clicks.

The system didn’t understand you — but it noticed patterns.

Why this era matters:

It proved computers could learn simple behaviors from use.

2013-2016

Rules and Automation

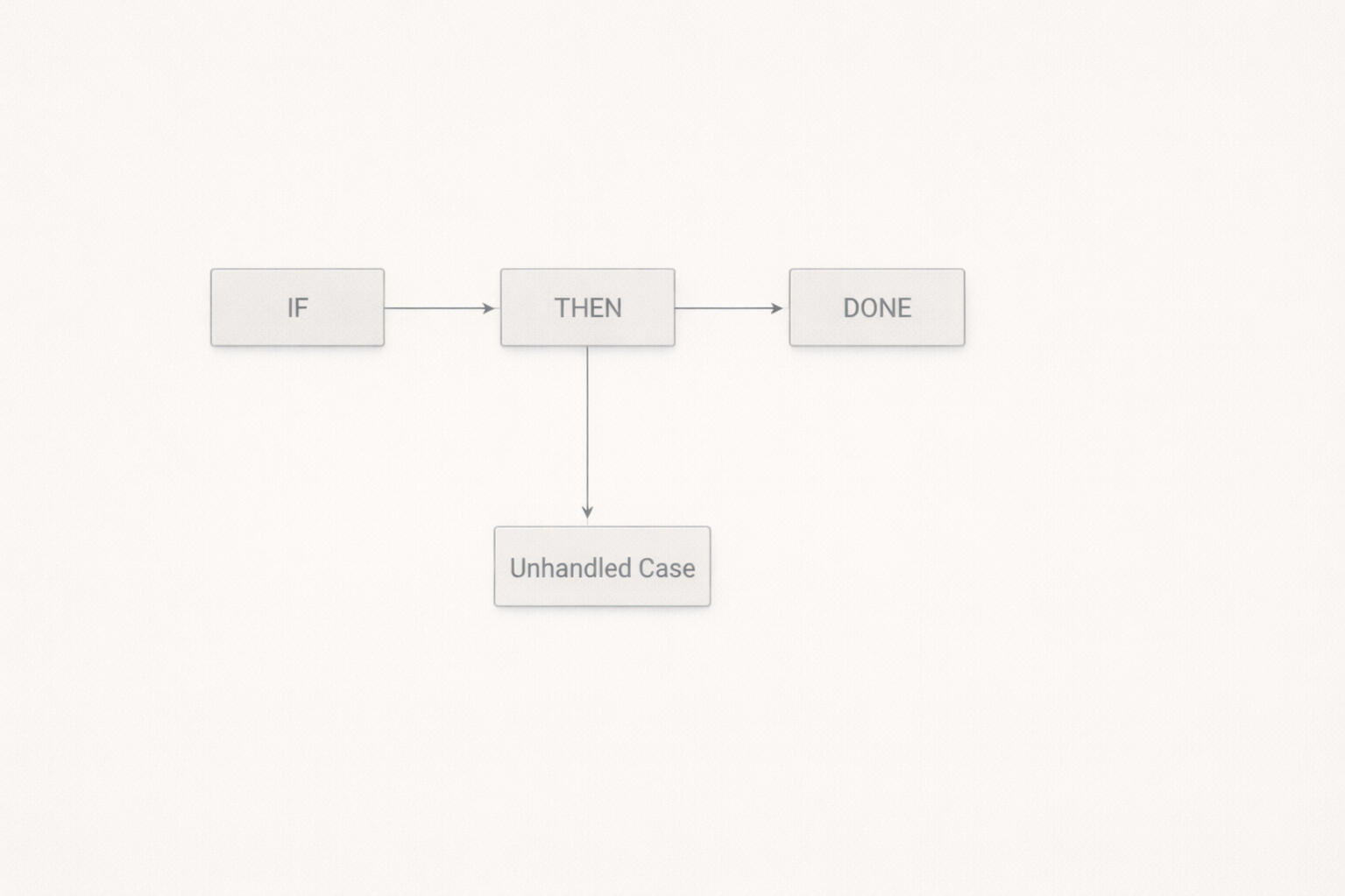

If This, Then That

Workflows became automated.

Tasks ran on schedules.

Everything worked — until the situation changed.

Why this era matters:

It revealed that rigid automation breaks in real life.

2017-2019

AI Gets a Name

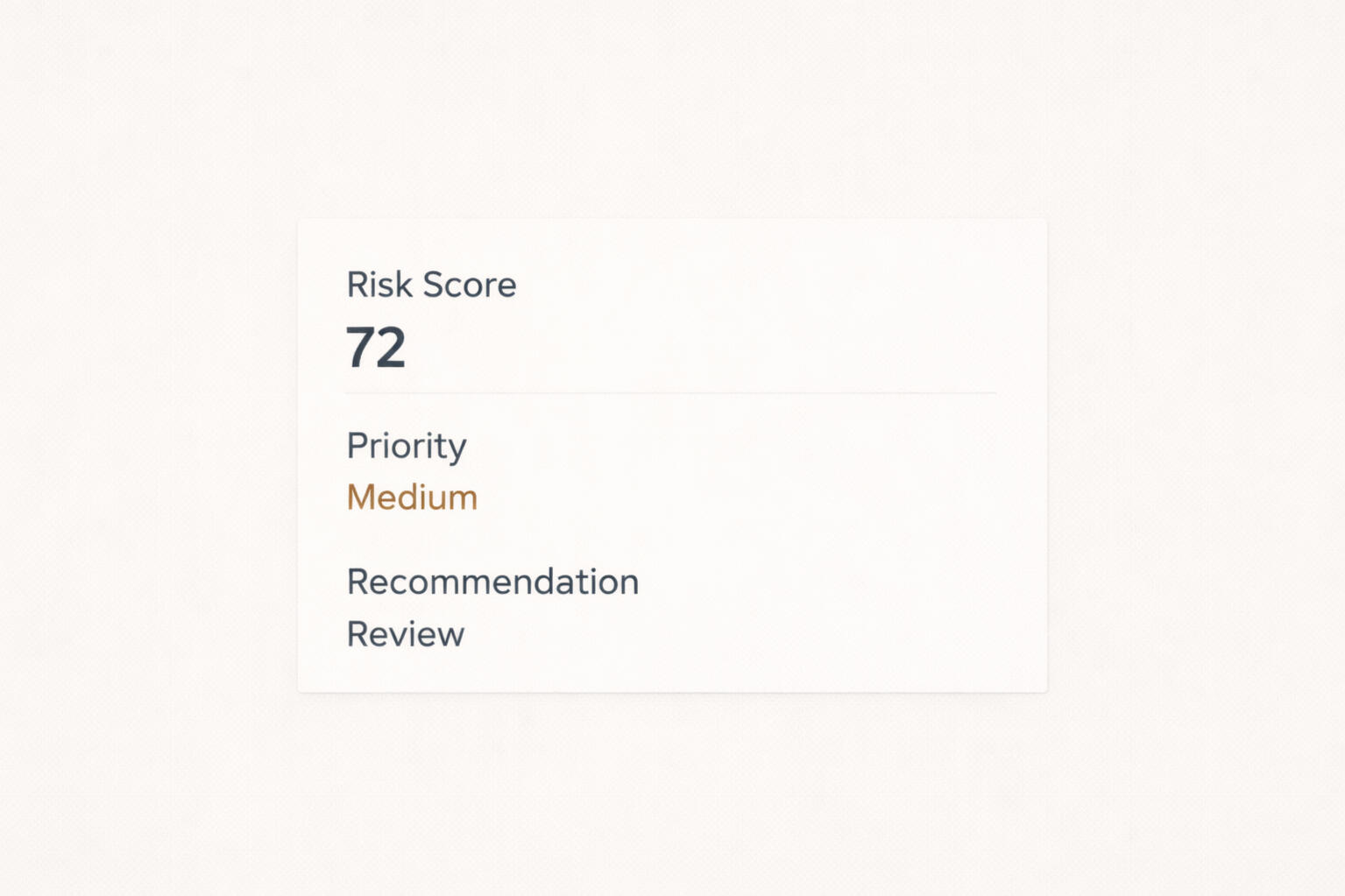

Predictions Appear

Software started scoring, ranking, and predicting.

“Risk levels.” “Recommendations.”

Most people didn’t know how it decided.

Why this era matters:

Intelligence arrived before trust did.

2020-2021

Pressure Test

Reality Interferes

Sudden change confused models.

Automation behaved unpredictably.

Alerts piled up.

People stopped trusting the outputs.

Why this era matters:

It showed that AI fails fast when the world changes.

2022-2023

Useful AI

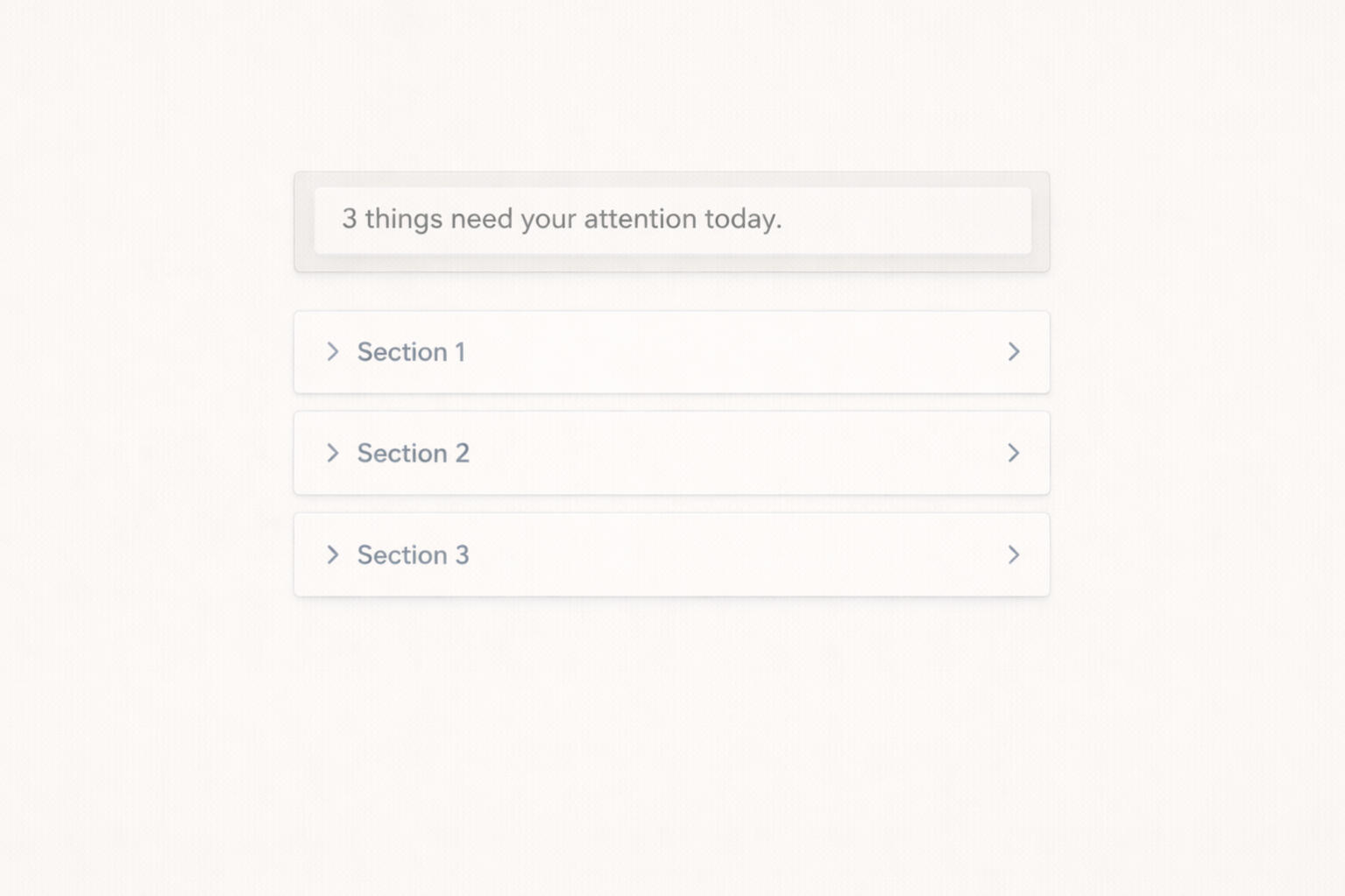

Help, Not Control

AI summarized emails and reports.

It highlighted what mattered.

People stayed responsible for decisions.

Why this era matters:

AI worked best when it reduced mental load.

2024-2025

AI With Limits

Clear Boundaries

AI was allowed to assist — not act alone.

Logs existed. Decisions were traceable.

Trust started to return.

Why this era matters:

Limits made intelligence safer and more reliable.

2026

Sovereign AI

Boring and Reliable

AI runs inside systems you own.

Data stays put.

Automation handles routine work quietly.

Most days, no one thinks about it.

That’s the point.

Why this era matters:

Real AI doesn’t impress — it just works.

Horizon

2015-2017

Function Under Pressure

Survival Interfaces

Most critical systems were built for trained staff working under stress.

Interfaces were dense, text-heavy, and unforgiving. Speed mattered more than comfort.

These systems worked—but only if the human could keep up.

Why this era matters:

It demonstrated that reliability alone isn’t enough when people are exhausted or overwhelmed.

2018-2019

Tools Without Cohesion

Fragmented Digitization

Organizations rapidly adopted new software to improve efficiency.

Instead, many ended up with disconnected tools, duplicate data, and constant alerts.

Nothing fully failed—but everything became harder to manage.

Why this era matters:

It revealed that adding technology without coordination increases mental load.

2020

When Systems Break

Pressure Test

Workflows changed almost overnight.

Systems built for stable conditions were pushed into emergency use.

Automation behaved unpredictably.

Information lacked context.

Decision-making became stressful.

Why this era matters:

It proved that systems designed only for normal conditions fail precisely when they are most needed.

2021-2022

Calm Becomes a Requirement

Intentional Simplification

Burnout became impossible to ignore.

Design started focusing on reducing cognitive strain, not just adding features.

Interfaces became simpler.

Language became clearer.

Workflows became shorter.

Why this era matters:

It showed that calm is not aesthetic — it is a prerequisite for accuracy, safety, and trust.

2023-2024

Thinking Without Overreach

Intelligence With Restraint

AI became widely accessible, but so did concern about misuse.

Organizations wanted help—not loss of control.

Successful systems used AI to summarize, highlight, and assist—without replacing judgment.

Why this era matters:

It established that intelligence without boundaries erodes trust faster than it creates value.

2025

Capability, Experienced

Keystone & Coherence

CortexForge treats experience as the primary interface.

Systems are visible, understandable, and stable as they operate.

Understanding comes from interaction.

Coherence keeps complexity in check.

Why this era matters:

What users experience is what the system is.

2026

Trust, Over Time

These systems were not designed for launch.

They were designed for years.

Horizon

2014–2016

Command Language

Compliance First

Language was written to control outcomes.

Short. Directive. Legal-heavy.

People were expected to “know the rules.”

Understanding was assumed — not supported.

Why this era matters:

Early digital systems inherited military, legal, and industrial language patterns.

2017–2019

Template Expansion

Standardized, Detached

Organizations scaled fast.

Scripts, templates, and approved phrases multiplied.

Language became consistent — but impersonal.

Messages were correct, yet emotionally flat.

Why this era matters:

Efficiency increased; trust quietly declined.

2020

Language Under Crisis

Tone Breakdown

COVID exposed language failures instantly.

Guidance changed daily.

Instructions conflicted.

Tone escalated stress instead of reducing it.

People didn’t feel informed — they felt managed.

Why this era matters:

This is when leaders realized language shapes nervous systems.

2021–Mid 2022

Clarity Over Authority

Explaining, Not Ordering

Burnout became visible.

Language softened without losing precision.

Explanations replaced commands.

Tone became a performance factor.

Why this era matters:

Clear language began improving compliance, safety, and accuracy.

Late 2022–2023

AI Touches Language

Assistance, Not Voice Replacement

AI began drafting, summarizing, and highlighting.

The best systems stayed human-authored at the edges.

Trust depended on restraint, not fluency.

Why this era matters:

Organizations learned that who speaks still matters.

2024–2025

Trauma-Informed Language

Meaning Preserved

Language became part of system design.

Neutral. Grounded. Non-coercive.

Written to reduce cognitive load — not impress.

CortexForge embeds language that supports decision-making, not pressure.

Why this era matters:

Language became infrastructure, not copy.

2026

Stewarded Language

Stable Over Time

Tone survives staff turnover.

Meaning remains intact years later.

Language evolves without erasing intent.

Words stop drifting.

Horizon

2013–2015

Physical Presence

People as the System

Incidents were handled face-to-face.

Paper logs. Radios. Phone calls.

Knowledge lived in people’s heads.

Systems worked — until people were unavailable.

Why this era matters:

Reliability depended entirely on humans.

2016–2018

Digital Entry Begins

Forms Replace Paper

Online forms and databases appeared.

Reporting improved — response did not.

Data arrived faster than decisions.

Why this era matters:

Storage improved; coordination lagged.

2019

Tool Proliferation

Nothing Talks to Anything

Departments adopted specialized software.

Alerts multiplied.

Ownership blurred.

Nothing failed — everything slowed.

Why this era matters:

Fragmentation quietly increased cognitive load.

2020

System Stress Test

Channels Collapse

Emergency conditions overwhelmed tools.

Phones rang endlessly.

Emails stacked.

Automation behaved unpredictably.

This exposed that systems weren’t built for uncertainty.

Why this era matters:

It revealed that systems optimized for normal operations fail when context changes suddenly.

2021–Early 2022

Workflow Awareness

Routing Matters

Organizations began mapping how work flows.

Escalation paths were defined.

Authority needed visibility.

Systems started supporting decisions — not just records.

Why this era matters:

It marked the shift from data collection to intentional coordination.

Late 2022–2023

Assisted Operations

AI as Support

AI triaged, summarized, and flagged signals.

Humans still decided.

The win wasn’t speed — it was focus.

Why this era matters:

It proved AI adds value when it reduces noise, not when it replaces judgment.

2024–2025

Coordinated Intelligence

CortexForge Systems

Signals route automatically with context intact.

Humans receive what matters — when it matters.

Escalation is intentional. Nothing surprises.

Systems finally work with people.

Why this era matters:

It demonstrates that intelligence becomes trustworthy when coordination is designed, not assumed.

2026

Trust Over Time

Systems That Mature

Processes evolve without disruption.

Historical data stays readable.

Automation remains calm, governed, and explainable.

Systems age — without decay.

Horizon